AT A GLANCE

Digital platforms, including social media, e-commerce, and gaming communities, face increasing pressure every day. More users. More content. More complexity. More risk. Content moderation is the key to protection. When done right, it makes the difference between brand success and public backlash. Ready to master the game? We have picked 10 must-know content moderation best practices for 2025. Learn how to scale smart, safe, and global. Read on.

Introduction

In 2025, content moderation is no longer just a support function but a frontline defence. What was once a quiet, back-office task has become a high-stakes, business-critical mission demanding speed, precision, and constant vigilance. Powerful forces are fuelling this shift: hyperconnectivity, the rise of deceptive AI-generated content, and tightening global regulations. Together, they have completely reshaped the landscape, turning content moderation best practices from a nice-to-have into a business necessity.

Why Content Moderation Best Practices Cannot Wait

Content moderation now sits at the core of brand protection, user safety, and legal compliance. More than ever, it is a decisive factor in building trust and sustaining reputation in today’s dynamic and complex digital world. Companies that adapt quickly will thrive.

Those who fail to act risk being exposed and left dangerously behind. Whether you manage it in-house or outsource, now is the time to reassess your approach. Improvements and best-practice updates may be needed.

However, the mission remains the same: to protect your brand, users, and communities while maintaining compliance and ethical standards.

The figures below reveal the vast scale of digital expansion and engagement alongside the growing severity of related threats:

By February 2025, 5.56 billion people, approximately 68% of the global population, were using the internet. Northern Europe led in penetration by population share, while Eastern Asia accounted for the largest number of users worldwide. (Source: Statista)

At the same time, global cybercrime has grown sharply, with financial losses expected to reach $10.5 trillion annually by 2025, up from $3 trillion in 2015. This surge highlights the rising threat worldwide. (Source: Cybersecurity Ventures)

Key Content Moderation Challenges

Content moderation has consistently posed challenges, evolving in tandem with the digital landscape. Today, however, several issues have grown especially urgent and complex. The effort is now shaped by intensifying pressures, including more sophisticated threats, rising user expectations, and stricter regulatory demands. As a result, content governance requires sharper focus and coordinated action to keep online spaces safe, welcoming, and trustworthy. Content moderation best practices are one of the key enablers of this shift, providing structure, clarity, and consistency in the face of growing complexity.

Scale vs Control

A key driver of content moderation complexity is the rapid increase in user-generated content. Every second, millions of videos, images, posts, and comments pour into an expanding ecosystem of platforms.

This volume makes it more challenging than ever to distinguish between what is appropriate and what is harmful or misleading.

The lines are often blurred, and context can shift dramatically across languages, cultures, and formats.

Simply put, the speed and scale of content creation have far outstripped the capacity of traditional moderation methods.

New Platforms, Smarter Threats

Furthermore, today’s digital world is more crowded and competitive than ever. New social apps, forums, and interactive platforms emerge constantly, each with unique rules, formats, and audience behaviours.

At the same time, harmful content has become more evasive. Misinformation, hate speech, and harassment are often crafted to bypass detection, sometimes even generated by AI or coordinated campaigns.

This convergence of rapid platform growth and smarter threats makes modern oversight processes both vital and more demanding.

Technological and Regulatory Pressures

In addition, while advances in AI and automated moderation tools offer much-needed scalability, they also introduce risks of bias, false positives, and a lack of contextual understanding.

At the same time, regulatory frameworks worldwide are undergoing rapid evolution.

They compel platforms to navigate a complex patchwork of legal obligations, usually with significant consequences for non-compliance.

These combined pressures demand more sophisticated, transparent, and accountable approaches to content governance.

Operational and Human Realities

Beyond technology and regulation, a range of operational and human hurdles also demand attention.

First, moderators must walk a fine line between speed and fairness, reviewing content quickly enough to prevent harm but carefully enough to avoid over-censorship.

Then, the global nature of platforms adds another layer of complexity, requiring adaptation across languages, cultures, and legal systems.

On top of that, the psychological strain of viewing harmful content makes moderator well-being a serious concern. Finding, training, and retaining the right talent is becoming harder, too.

Ten Content Moderation Best Practices for 2025

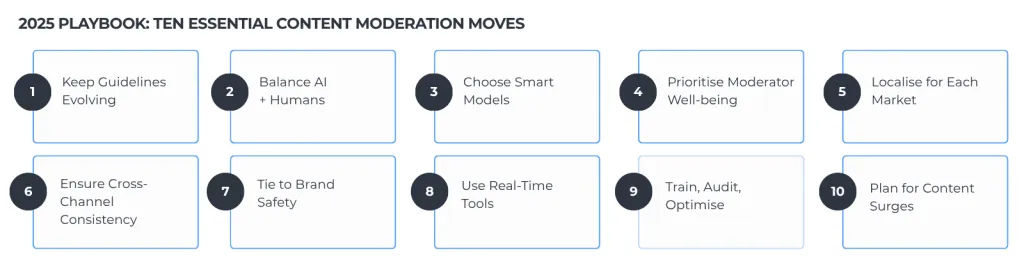

Below are ten essential content moderation best practices to help decision-makers meet the realities of 2025. They strike a balance between automation and human insight, ensuring compliance and preparing for the unexpected. Moreover, they reflect the latest industry thinking and showcase how to approach content reviewing at scale: safely, globally, and with precision.

Content moderation outsourcing is the smart, scalable solution in a world where in-house teams often struggle to compete. It delivers fast, precise, and growth-ready protection, freeing platforms from the endless grind of hiring, training, and retaining expert moderators. Furthermore, external services bring deep expertise and cutting-edge technology, harnessing advancements that evolve at a rapid pace to tackle emerging threats before they escalate.

Flexibility and Global Expertise at Scale

Diving deeper, content moderation outsourcing unlocks instant access to multilingual teams fluent in local slang, cultural nuances, and regional laws, which are key to building trust worldwide.

It offers unparalleled flexibility, letting you ramp up during viral spikes or new launches without exhausting your core staff. This agility ensures every oversight process keeps pace with the digital world’s fast evolution.

Moreover, outsourcing helps navigate evolving regulations smoothly, such as the Digital Services Act, GDPR, or local data protection regulations. This enables you to minimise the risk of brand damage, legal penalties, or user mistrust.

Cost Efficiency Meets Advanced Hybrid Solutions

Among many other benefits, outsourcing slashes overhead on recruitment, training, and infrastructure. It also enables companies to scale more quickly without compromising operational control.

Partnering with the right BPO enables you to combine AI-driven tools with human insight to identify subtle threats that machines alone miss while ensuring security at scale.

Beyond cost savings, this hybrid model sharpens accuracy and compliance. With expert support, you can quickly adapt to new regulations, languages, and content types across various markets.

Trusted providers offer 24/7 coverage and crisis-ready protocols, safeguarding your brand from risk and downtime so you can focus fully on growth and innovation.

How to Choose the Right Content Moderation Outsourcing Partner

Choosing the right content moderation outsourcing partner is a strategic move that directly impacts brand safety, user trust, and regulatory compliance. This decision goes far beyond cost saving. It is about finding a provider who can combine speed, scale, and sensitivity while upholding your values and protecting your digital community. The right BPO does more than filter content. It helps you build resilience, navigate complexity, and maintain ethical standards in real time.

Domain Expertise and Adaptability

Prioritise partners with proven experience in your industry. Gaming platforms, social media, e-commerce, and healthcare each demand specific contextual understanding and risk awareness. A skilled BPO offers tailored workflows, cultural insight, and nuanced decision-making, which are essential for flagging misinformation, hate speech, or graphic materials with precision.

Transparent Processes and Quality Control

Effective moderation requires visibility. Choose a vendor who follows well-defined content moderation best practices and offers clear escalation paths, defined KPIs, and real-time reporting. Look for continuous quality checks, regular performance reviews, and a commitment to training and calibration. Transparency is a critical element of collaboration, safeguarding brand reputation and regulatory compliance.

Scalable Coverage with Human-AI Balance

The best providers offer 24/7 multilingual coverage, leveraging automation to handle high volumes and a human touch to manage nuances. This hybrid approach enables you to scale processes without compromising empathy or context. Whether you are growing globally or facing sudden surges, a BPO with the right reach and agility ensures your users stay protected around the clock and across every channel.
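To make the human-AI balance more concrete, here is a minimal sketch of how such a hybrid workflow might route content. It assumes an automated classifier has already assigned each item a harm score between 0 and 1. The names `Item`, `route`, and both threshold values are hypothetical and purely illustrative; a real platform would tune them to its own policies and risk appetite.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
AUTO_REMOVE_THRESHOLD = 0.95   # at or above this, automation acts alone
AUTO_APPROVE_THRESHOLD = 0.10  # at or below this, content is published


@dataclass
class Item:
    item_id: str
    harm_score: float  # assumed classifier output: 0.0 (benign) to 1.0 (harmful)


def route(item: Item) -> str:
    """Return 'remove', 'approve', or 'human_review' for a single item."""
    if not 0.0 <= item.harm_score <= 1.0:
        raise ValueError("harm_score must be between 0.0 and 1.0")
    if item.harm_score >= AUTO_REMOVE_THRESHOLD:
        return "remove"
    if item.harm_score <= AUTO_APPROVE_THRESHOLD:
        return "approve"
    # Ambiguous cases need context and nuance, so they go to a person.
    return "human_review"


if __name__ == "__main__":
    for item in [Item("a", 0.99), Item("b", 0.02), Item("c", 0.50)]:
        print(item.item_id, route(item))
```

In this sketch, automation absorbs the clear-cut volume at both ends of the scale, while everything in between is queued for human moderators. Widening or narrowing the gap between the two thresholds is one way to trade speed against the share of decisions made by people.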

Conclusion

In 2025 and beyond, content moderation will no longer be a reactive process. Too much is at stake to do too little. Continuous improvement and adaptation are essential for brand survival. These ten content moderation best practices offer clear guidance and direction to help you stay ahead. By balancing automation, human insight, and agility, they address misinformation, cultural nuances, and regulatory demands. Digital platforms that adopt flexible frameworks, prioritise well-being, and leverage strategic outsourcing will be best positioned to build safer, stronger communities.

FAQ Section

1. Why is content moderation considered a core business function in 2025?

Because digital platforms now operate in an environment of constant scrutiny, content moderation has evolved from a behind-the-scenes task into a critical brand defence mechanism. With reputational, legal, and safety risks on the rise, maintaining clean, compliant, and user-safe environments is now a business imperative.

2. Can automation alone handle modern content moderation needs?

Not entirely. While AI tools can rapidly screen large volumes of content, they often struggle to grasp context, irony, or cultural nuance. A balanced approach, combining machine efficiency with human discernment, remains essential to ensure both accuracy and fairness.

3. What are the biggest risks of not updating moderation strategies regularly?

Outdated frameworks can lead to regulatory violations, reputational harm, and unchecked harmful content. Given the pace of platform evolution and rising threats, such as deepfakes and coordinated misinformation, stagnation exposes companies to avoidable crises.

4. How does outsourcing content moderation benefit fast-growing platforms?

Outsourcing offers flexible, on-demand scalability and instant access to multilingual, culturally aware teams. It reduces the burden of recruitment and training while providing around-the-clock oversight. This agility is particularly valuable during rapid user growth or viral content spikes.

5. What qualities should companies look for in a content moderation outsourcing partner?

Look for proven experience in your industry, transparent processes with clear escalation paths, defined KPIs, and real-time reporting, and 24/7 multilingual coverage that balances automation with human judgement. A strong partner also commits to continuous quality checks, training, and calibration, so that moderation stays aligned with your values and regulatory obligations.